With all the hoopla surrounding high-frequency trading, it’s easy to lose sight of the fact that it is part of a much larger phenomenon, namely automated trading.

Automated trading, which as its name suggests is machine-driven and electronic, is the umbrella under which methodologies such as high-frequency, algorithmic, and quantitative trading reside. A common denominator is the need for heavy-duty number crunching, a complex task made more complex by the sheer volume of numbers at hand.

The explosion of ‘big data’ has affected all industries, but the financial markets have their own unique set of issues, such as the need to capture time-series data and merge it with real-time event processing systems. For many market participants, point-to-point speed isn’t necessarily a trump card.

“We see a fundamental shift in focus from low latency to big data,” said James McInnes, chief executive of trading-platform provider Embium. “It’s not about being fastest, it’s about being able to process tons of data. Lower trading volumes have forced people to trade multiple asset classes, which means more data to analyze.”

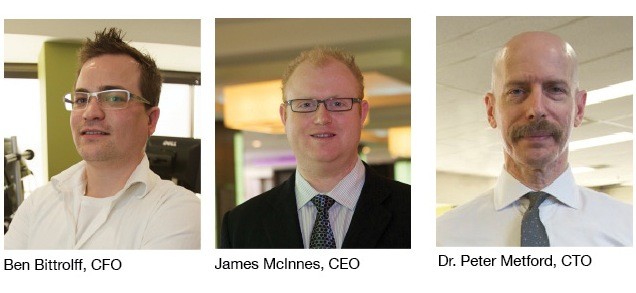

Embium, whose name is a sounded-out acronym of the initials of its founders’ last names (McInnes, chief financial officer Ben Bittrolff and chief technology officer Dr. Peter Metford), recently changed its name from Cyborg Trading Systems and opened a New York office. The five-year-old company rebranded to highlight its approach to trading automation for institutional investors.

“We wanted our new corporate name, Embium, to convey our focus on servicing institutional customers and to better reflect the sophistication of our product offering,” said McInnes. “We have seen tremendous growth for cloud technology in the financial industry and opening an office at 90 Broad Street, New York will allow us to better meet this demand.”

The company’s foundation for automated trading was built around a desktop automation platform that enabled retail-level market participants to rapidly develop and deploy trading strategies. “We have since evolved the technology and in the process, created a new trading engine to effectively serve global financial institutions,” said McInnes. “Our focus has shifted to flexible strategy development and highly scalable, server-side automation solutions for low-latency executions.”

DIY

There is a do-it-yourself movement afoot that allows traders of all stripes to create algorithms and deploy them in live markets. This democratization is an advance, since algorithmic trading had been the exclusive realm of deep-pocketed market players, but it also creates risks.

“One of the biggest changes in the financial markets industry has been the deployment of drag-and-drop technology, which allows traders to put their own strategies into production,” said McInnes. A necessary corollary of the speed at which algorithms can now be developed and deployed is rigorous quality assurance and testing, he added.

The biggest challenges associated with software testing stem from deficiencies in the test process rather than the testing itself. “Software development and software testing are interdependent,” said Jonathan Leaver, vice president of automated trading at Embium. “You don’t want software engineering changes to cause something to break.”

Paying more attention to software quality at every stage of the lifecycle can reduce both the cost of software development and the time to market. When quality issues are considered before the formal quality-assurance phase, defects are caught earlier, so organizations can lower the cost of quality and shorten the time to deployment.

“We have built a collection of testing tools by which algorithms can be decomposed into building blocks and tested throughout their lifecycles,” Leaver said. “By breaking software into ‘Lego’ blocks, it’s possible to test them first individually and then as an integrated whole.”
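To make the ‘Lego’ idea concrete, here is a minimal sketch, assuming a strategy can be split into three hypothetical blocks (a moving-average signal, a crossover decision and an order-sizing step). These names are illustrative only and are not Embium’s components. Each block gets its own unit test, and a second test exercises the blocks chained together as an integrated whole.

```python
from typing import List, Optional

# Hypothetical building blocks for illustration; not Embium's components.


def moving_average(prices: List[float], window: int) -> Optional[float]:
    """Signal block: mean of the last `window` prices, or None if too few."""
    if window <= 0:
        raise ValueError("window must be positive")
    if len(prices) < window:
        return None
    return sum(prices[-window:]) / window


def crossover_signal(price: float, average: Optional[float]) -> int:
    """Decision block: +1 (buy) above the average, -1 (sell) below, 0 otherwise."""
    if average is None or price == average:
        return 0
    return 1 if price > average else -1


def size_order(signal: int, unit: int) -> int:
    """Sizing block: turn a signal into a signed order quantity."""
    return signal * unit


def strategy(prices: List[float], window: int = 3, unit: int = 100) -> int:
    """Integrated whole: the three blocks chained together."""
    average = moving_average(prices, window)
    return size_order(crossover_signal(prices[-1], average), unit)


def test_blocks_individually() -> None:
    assert moving_average([1.0, 2.0, 3.0], 3) == 2.0
    assert moving_average([1.0], 3) is None
    assert crossover_signal(3.0, 2.0) == 1
    assert crossover_signal(1.0, 2.0) == -1
    assert crossover_signal(1.0, None) == 0
    assert size_order(-1, 100) == -100


def test_integrated_whole() -> None:
    assert strategy([10.0, 10.0, 13.0]) == 100   # 13 > 11: buy
    assert strategy([10.0, 10.0, 7.0]) == -100   # 7 < 9: sell
    assert strategy([10.0]) == 0                 # too little data: hold


if __name__ == "__main__":
    test_blocks_individually()
    test_integrated_whole()
    print("all tests passed")
```

Because each block is tested in isolation first, a failure in the integrated test points to the wiring between blocks rather than to the logic inside one of them.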

Rigorous systems testing has gained importance in the eyes of market participants, especially after multiple highly publicized market disruptions over the past few years. Regulation Systems Compliance and Integrity, proposed by the U.S. Securities and Exchange Commission in March, is designed to ensure that the core technology of national securities exchanges, alternative trading systems, clearing agencies, and plan processors meets certain standards and that these entities conduct business continuity testing with their members and participants.

Big Data holds implications for the future of trading, including algorithmic trading, strategy development, quantitative analysis and post-trade analytics. “A lot of people talk about Big Data and don’t really understand what it is,” said Jamie Oschefski, vice president of accounts at Embium.

Big Data comes in different forms and allows for better visibility, faster analytical cycles and improved control over risk and credit exposure. “If you look across the 13 U.S. equity exchanges, nine options exchanges and 50 or so dark pools, there are 40 trillion records created per day, which equates to roughly 2 petabytes of data,” said Oschefski. “To put that into perspective, that is the same as storing the 12 billion photos on Facebook or roughly 26 years of HD video.”
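As a rough sanity check on Oschefski’s figures, assuming decimal units (1 PB = 10^15 bytes) and averaging over a full 24-hour day (both assumptions of ours, not his), the implied size per record and average data rate work out as follows:

$$
\frac{2\times10^{15}\ \text{bytes}}{40\times10^{12}\ \text{records}} = 50\ \text{bytes per record},
\qquad
\frac{2\times10^{15}\ \text{bytes}}{86{,}400\ \text{s}} \approx 2.3\times10^{10}\ \text{bytes/s} \approx 23\ \text{GB/s}.
$$

Since most of that activity is concentrated in trading hours rather than spread over the whole day, the peak rate would be higher than this average.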

The interconnectedness of financial markets is propelling a move away from single-asset trading platforms. In the futures industry, for example, traders need to understand the trends in price movements in underlying financial instruments.


Embium aims to provide clients with technology that works with all asset classes to cope with the magnitude of data they deal with on a daily basis. “If I have a successful strategy trading on the E-Mini S&P futures, the value of having the ability to port that strategy to trade in the ETF market is huge,” said Oschefski. “This is another way for these firms to spread their risk and is certainly a paradigm shift.”

Embium specializes in the development of automated trading technology for global financial firms, including hedge funds, brokers, banks, exchanges and professional traders. Its technology is designed to streamline the development and implementation of sophisticated algorithmic strategies. Cloud Trader, Embium’s flagship multi-asset, broker-agnostic automation product, is built to enable rapid development, testing and deployment of algorithmic strategies through multiple brokers at multiple venues.

Strategy Streamliner

The Algorithm Development Kit (ADK), part of the Cloud Trader platform, provides full application programming interface (API) access to the strategy-development framework, as well as a customizable simulation environment in which the functionality, correctness and performance of algorithms can be tested.

Serving as a ‘local sandbox test environment’, the ADK runs on a dedicated local development machine so that functionality can be tested throughout the development process, and it includes debugging tools that provide full code transparency.

“ADK streamlines the development and implementation of complex trading strategies,” said McInnes. “You only need to write unique, strategy-specific code, and our building block will handle everything else.”
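The division of labor McInnes describes, where the framework handles the plumbing and the author writes only strategy-specific logic, is commonly implemented as a base class with an overridable hook. The sketch below assumes that pattern; `StrategyBase`, `on_tick`, `Order` and the `BuyTheDip` example are hypothetical names and do not reflect the actual ADK API.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Order:
    symbol: str
    quantity: int  # positive = buy, negative = sell


class StrategyBase(ABC):
    """Hypothetical framework class: owns market-data storage and order routing."""

    def __init__(self, symbol: str) -> None:
        self.symbol = symbol
        self.prices: List[float] = []
        self.sent: List[Order] = []

    def handle_tick(self, price: float) -> None:
        # Framework responsibility: record the tick, ask the strategy, route any order.
        self.prices.append(price)
        order = self.on_tick(price)
        if order is not None:
            self.route(order)

    def route(self, order: Order) -> None:
        # Stand-in for broker connectivity; this sketch just records the order.
        self.sent.append(order)

    @abstractmethod
    def on_tick(self, price: float) -> Optional[Order]:
        """The only method a strategy author has to write."""


class BuyTheDip(StrategyBase):
    """Strategy-specific code: buy 10 after a tick-to-tick drop of more than 1%."""

    def on_tick(self, price: float) -> Optional[Order]:
        # self.prices[-1] is the current tick; self.prices[-2] is the previous one.
        if len(self.prices) >= 2 and price < self.prices[-2] * 0.99:
            return Order(self.symbol, 10)
        return None


strategy = BuyTheDip("ES")
for p in [100.0, 100.5, 99.0, 98.9]:
    strategy.handle_tick(p)
```

Only the drop from 100.5 to 99.0 exceeds 1%, so `strategy.sent` ends up holding a single buy order. Everything outside `on_tick` could be swapped for real data feeds and broker adapters without touching the strategy class.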

For the past two decades, self-regulatory organizations have followed a voluntary set of principles articulated in the SEC’s Automation Review Policy and participated in what is known as the ARP Inspection Program. The SEC has noted that the continuing evolution of the securities markets into an almost entirely electronic marketplace, highly dependent on sophisticated trading and other technology, has posed challenges for the program.

Embium uses specialized proprietary algorithms, known as “Risk Wrappers,” that act as containers for risk, preventing trading algorithms from producing unintended consequences.

“The Risk Wrapper is a central part of our technology,” said McInnes. “Algorithm risk is very different from other types of risk. You need to look for errors in logic that will result in unwanted behavior. Risk Wrappers ensure that the amount executed on a trade does not exceed a predetermined limit, or that you’re not buying on the offer or selling on the bid more than X times per second.”
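Embium’s Risk Wrappers are proprietary, so the sketch below only illustrates the two example rules McInnes mentions, under assumptions of our own: a pre-trade check that rejects any order larger than a fixed quantity limit, and a one-second sliding window that caps how many aggressive orders (buying at or above the offer, selling at or below the bid) may pass. The `RiskWrapper` class and its parameters are hypothetical; the clock is injectable so the rate limit can be tested deterministically.

```python
import time
from collections import deque
from typing import Callable, Deque, Optional


class RiskWrapper:
    """Hypothetical pre-trade check modeled on the two rules McInnes describes."""

    def __init__(self, max_quantity: int, max_aggressive_per_sec: int,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_quantity = max_quantity
        self.max_aggressive_per_sec = max_aggressive_per_sec
        self.clock = clock
        self._aggressive_times: Deque[float] = deque()

    def check(self, side: str, quantity: int, price: float,
              best_bid: float, best_offer: float) -> Optional[str]:
        """Return None if the order may pass, otherwise a rejection reason."""
        if quantity > self.max_quantity:
            return "quantity exceeds limit"
        aggressive = ((side == "buy" and price >= best_offer) or
                      (side == "sell" and price <= best_bid))
        if aggressive:
            now = self.clock()
            # Sliding one-second window: forget aggressive orders older than 1 s.
            while self._aggressive_times and now - self._aggressive_times[0] >= 1.0:
                self._aggressive_times.popleft()
            if len(self._aggressive_times) >= self.max_aggressive_per_sec:
                return "aggressive-order rate exceeded"
            self._aggressive_times.append(now)
        return None


# Usage with a fake clock so the timing is deterministic.
fake_time = [0.0]
wrapper = RiskWrapper(max_quantity=500, max_aggressive_per_sec=2,
                      clock=lambda: fake_time[0])
```

Passive orders (for example, a buy resting at the bid) never count toward the rate limit, which mirrors the distinction in the quote between ordinary orders and repeatedly lifting the offer or hitting the bid.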

Cloud Trader is split into three parts. The first is the Cloud Trader Front End, a command-and-control system that allows the quantitative trader to configure algorithms and control the system as a whole. “We then have a centralized relay point, or hub, which is where all the data is stored, and it routes messages to co-located trading servers and then back to the Front End,” said McInnes.
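The hub McInnes describes both stores data centrally and relays messages between the Front End and the co-located servers. A minimal in-process sketch of that store-then-route pattern follows; the `Hub` and `Message` classes and the endpoint names are hypothetical, and a real system would replace the direct function calls with network transport.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Message:
    source: str
    destination: str
    body: str


class Hub:
    """Hypothetical central relay: stores every message, then forwards it."""

    def __init__(self) -> None:
        self.log: List[Message] = []  # central message store
        self.endpoints: Dict[str, Callable[[Message], None]] = {}

    def register(self, name: str, handler: Callable[[Message], None]) -> None:
        self.endpoints[name] = handler

    def send(self, message: Message) -> None:
        if message.destination not in self.endpoints:
            raise KeyError(f"unknown destination: {message.destination}")
        self.log.append(message)                      # store first...
        self.endpoints[message.destination](message)  # ...then route


# Usage: the front end configures an algorithm on a (hypothetical) co-located
# server, and the server acknowledges back through the same hub.
hub = Hub()
front_end_inbox: List[Message] = []


def trading_server(msg: Message) -> None:
    hub.send(Message("trading-server-1", "front-end", f"ack: {msg.body}"))


hub.register("front-end", front_end_inbox.append)
hub.register("trading-server-1", trading_server)
hub.send(Message("front-end", "trading-server-1", "start algo 7"))
```

Routing every message through one hub gives a single, complete log of commands and responses, at the cost of an extra hop between the Front End and the trading servers.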

The third component is the complex event processing engine, which resides in the co-located trading environment. “Typical use cases for CEP are processing data for trading or analyses,” McInnes said. “This is where we process the data, and produce an action.”

The CEP engine is designed to process very large volumes of information efficiently through hardware optimization. “The engine runs entirely in-memory for latency optimization, resulting in low-latency trade executions regardless of the number and complexity of running algorithms,” said McInnes.
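As a rough illustration of ‘process the data, and produce an action’, here is a minimal in-memory rule of the kind a CEP engine evaluates, assuming a simple momentum pattern chosen for illustration: emit `"BUY"` when the price rises more than a threshold above its low within a sliding time window. `Tick` and `MomentumRule` are hypothetical names, not part of Embium’s engine.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, Optional


@dataclass
class Tick:
    timestamp: float  # seconds
    price: float


class MomentumRule:
    """Hypothetical CEP-style rule held entirely in memory: emit 'BUY' when the
    price rises more than `threshold` above its low within `window` seconds."""

    def __init__(self, window: float, threshold: float) -> None:
        self.window = window
        self.threshold = threshold
        self.events: Deque[Tick] = deque()

    def on_event(self, tick: Tick) -> Optional[str]:
        self.events.append(tick)
        # Evict events that have fallen out of the time window.
        while tick.timestamp - self.events[0].timestamp > self.window:
            self.events.popleft()
        low = min(e.price for e in self.events)
        return "BUY" if tick.price - low > self.threshold else None


rule = MomentumRule(window=1.0, threshold=0.5)
actions = [rule.on_event(Tick(t, p))
           for t, p in [(0.0, 100.0), (0.5, 100.2), (0.9, 100.6), (2.0, 100.7)]]
```

The third tick triggers the rule (a 0.6 rise within 0.9 seconds); by the fourth tick the earlier events have aged out of the window, so no action is produced. Scanning the window for its minimum is linear per event; a production engine would typically keep a monotonic queue or similar structure to make that constant-time.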

Beyond scaling to operate on large data sets, algorithms need to be designed to adapt when market conditions change, a capability most algorithms lack.


“A lot of the algorithmic strategies being developed today are hard-coded,” said McInnes. “Our differentiator is in allowing you to create a customizable strategy dynamically, and then deploy it through the cloud. The benefit of this approach is that you can write an algorithm once, run it against a live simulation and then deploy it globally. This is especially important as firms move towards trading multiple asset classes and geographies.”

Embium’s proprietary agent-based simulations use authentic market dynamics, including realistic fills and order queues. “We spent a lot of time looking at others’ simulation environments,” said McInnes. “When we went to build our own, we had the choice of either hooking into a market data feed or simulating market data with real people or artificial agents.”

The company chose the latter approach because a simulated environment can recreate the market’s reaction to a trading strategy, something that would be impossible to do with actual market data. “In low-latency trading, small market dynamics can make a big difference,” McInnes said. “Those market dynamics can be controlled and captured in a simulator.”
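One of the ‘small market dynamics’ a simulator can capture, and a replayed data feed cannot, is queue position: whether a resting order fills depends on how much volume sits ahead of it. The toy below, which is hypothetical and not Embium’s simulator, models just that piece. Our bid joins the back of a FIFO queue at one price level, and each step a simulated agent sends a random market sell that consumes the queue in price-time priority. The agents here do not react to our strategy, so this illustrates only the order-queue aspect of agent-based simulation.

```python
import random
from collections import deque
from typing import Deque, List


def simulate_bid_queue(resting: List[int], our_qty: int, steps: int,
                       max_sell: int, seed: int = 0) -> int:
    """Toy queue simulation (hypothetical). Our bid sits behind `resting`
    orders at a single price level; each step one simulated agent sends a
    market sell of 0..max_sell shares that consumes the queue from the front.
    Returns how many of our shares were filled."""
    rng = random.Random(seed)
    queue: Deque[List] = deque([["other", qty] for qty in resting])
    queue.append(["ours", our_qty])
    filled = 0
    for _ in range(steps):
        to_sell = rng.randint(0, max_sell)  # this agent's market-order size
        while to_sell > 0 and queue:
            owner, qty = queue[0]
            take = min(qty, to_sell)
            queue[0][1] -= take
            to_sell -= take
            if owner == "ours":
                filled += take
            if queue[0][1] == 0:
                queue.popleft()
    return filled


# Same random order flow, different queue positions.
deep_queue_fill = simulate_bid_queue([50, 50], our_qty=20, steps=30, max_sell=20, seed=3)
short_queue_fill = simulate_bid_queue([10], our_qty=20, steps=30, max_sell=20, seed=3)
```

With the same seed both runs see identical order flow, so any difference in fills comes purely from how many shares were queued ahead of us, the kind of effect that matters in low-latency trading and that a replayed feed cannot show.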

Embium uses a distributed system for a more holistic view of the market activity, which also makes data readily available for intraday analysis. “Leveraging the cloud to farm out computationally expensive activities is becoming more and more attractive,” Oschefski said. “The latency arms race is being led by only a few firms, and based on the discussions with our clients, the firms that can rationalize and normalize all this data will ultimately have the long-term competitive advantage.”

Embium “employs a significant number of PhDs specializing in machine learning, artificial intelligence and data science to keep up with the innovative demands of our clients,” said Oschefski.

Added McInnes, “Finance is a science problem now, not a business problem. In finance today, someone with a physics or computer science degree is more qualified than someone with an MBA.”